# Neptune + Optuna integration

Neptune is a lightweight experiment tracker that offers a single place to track, compare, store, and collaborate on experiments and models.

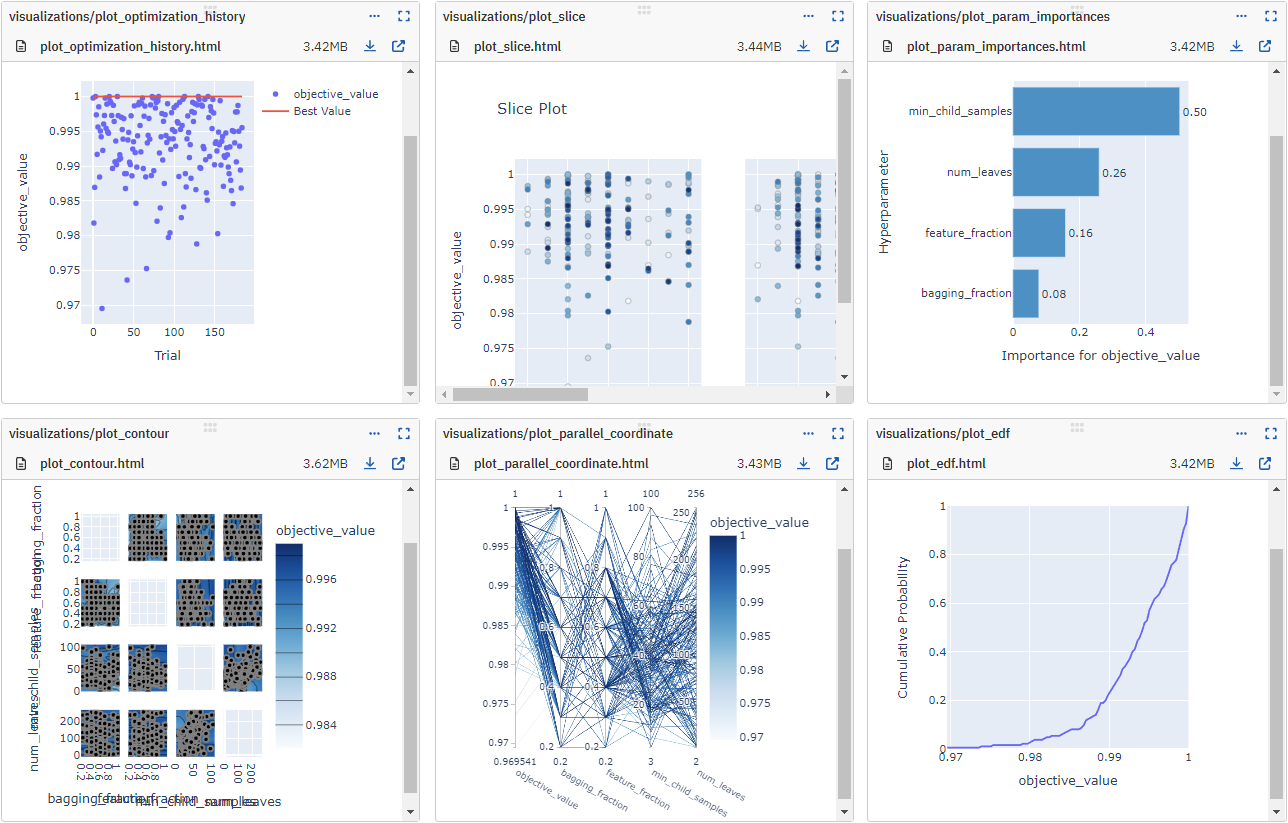

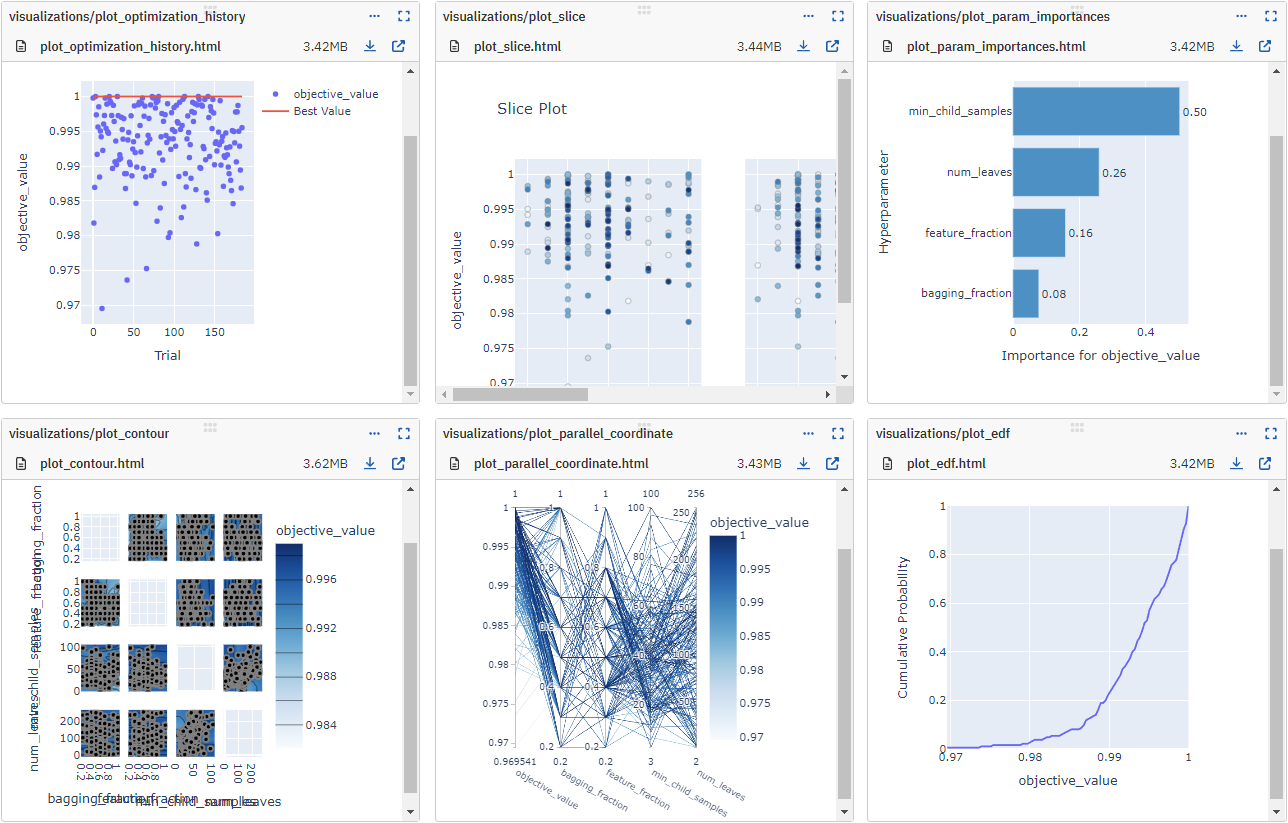

This integration lets you use it as an Optuna visualization dashboard to log and monitor hyperparameter sweeps live.

## What will you get with this integration?

* Log and monitor the Optuna hyperparameter sweep live:
  * values and params for each Trial
  * best values and params for the Study
  * hardware consumption and console logs
  * interactive plots from the `optuna.visualization` module
  * parameter distributions for each Trial
  * the Study object itself for studies using `InMemoryStorage`, or the database location for studies with database storage
* Load the Study directly from an existing Neptune run

## Resources

* [Documentation](https://docs.neptune.ai/integrations/optuna)

* [Code example on GitHub](https://github.com/neptune-ai/examples/blob/main/integrations-and-supported-tools/optuna/scripts)

* [Run logged in the Neptune app](https://app.neptune.ai/o/common/org/optuna-integration/runs/details?viewId=b6190a29-91be-4e64-880a-8f6085a6bb78&detailsTab=dashboard&dashboardId=Vizualizations-5ea92658-6a56-4656-b225-e81c6fbfc8ab&shortId=NEP1-18517&type=run)

* [Run example in Google Colab](https://colab.research.google.com/github/neptune-ai/examples/blob/master/integrations-and-supported-tools/optuna/notebooks/Neptune_Optuna_integration.ipynb)

## Example

On the command line:

```
pip install neptune-optuna
```

In Python:

```python
import neptune
import neptune.integrations.optuna as npt_utils
import optuna


# Placeholder objective; replace it with your own training/evaluation code
def objective(trial):
    x = trial.suggest_float("x", -10, 10)
    return -((x - 2) ** 2)


# Start a run
run = neptune.init_run(
    api_token=neptune.ANONYMOUS_API_TOKEN,
    project="common/optuna-integration",
)

# Create a NeptuneCallback instance
neptune_callback = npt_utils.NeptuneCallback(run)

# Pass the callback to study.optimize()
study = optuna.create_study(direction="maximize")
study.optimize(objective, n_trials=100, callbacks=[neptune_callback])

# Watch the optimization live in Neptune, then stop the run when done
run.stop()
```

## Support

If you get stuck or simply want to talk to us, here are your options:

* Check our [FAQ page](https://docs.neptune.ai/getting_help)

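* You can submit bug reports and feature requests on the [issue tracker](https://github.com/neptune-ai/neptune-optuna/issues), or contribute directly to the [repository](https://github.com/neptune-ai/neptune-optuna).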

* Chat! In the Neptune app, click the blue message icon in the bottom-right corner and send a message. A real person will talk to you ASAP (typically very ASAP).

* You can just shoot us an email at support@neptune.ai
