| Name | sne4onnx |
| --- | --- |
| Version | 1.0.12 |
| home_page | https://github.com/PINTO0309/sne4onnx |
| Summary | A very simple tool for situations where optimization with onnx-simplifier would exceed the Protocol Buffers upper file size limit of 2GB, or simply to separate onnx files to any size you want. Simple Network Extraction for ONNX. |
| upload_time | 2024-04-30 05:10:55 |
| maintainer | None |
| docs_url | None |
| author | Katsuya Hyodo |
| requires_python | >=3.6 |
| license | MIT License |
| requirements | No requirements were recorded. |
| Travis-CI | No Travis. |
| coveralls test coverage | No coveralls. |

# sne4onnx

A very simple tool for situations where optimization with onnx-simplifier would exceed the Protocol Buffers upper file size limit of 2GB, or simply to separate onnx files to any size you want. **S**imple **N**etwork **E**xtraction for **ONNX**.

https://github.com/PINTO0309/simple-onnx-processing-tools

[Downloads](https://pepy.tech/project/sne4onnx) [PyPI](https://pypi.org/project/sne4onnx/) [CodeQL](https://github.com/PINTO0309/sne4onnx/actions?query=workflow%3ACodeQL)

<p align="center">

<img src="https://user-images.githubusercontent.com/33194443/170151483-f99b2b70-9b69-48b7-8690-0ddfa8fb8989.png" />

</p>

# Key concept

- [x] When input OP names and output OP names are specified, the part of the ONNX graph between them is extracted and written out as an `.onnx` file.

- [x] I do not use `onnx.utils.extract_model` because it is very slow; instead, I implemented my own model separation logic.
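
The range-based extraction described above can be pictured as a backward walk from the requested outputs that stops at the requested inputs. The following is a minimal sketch on a toy graph made of plain tuples. It is not the tool's actual implementation, which operates on real ONNX models; the node layout and the `extract_subgraph` helper are hypothetical and exist only for illustration.

```python
from typing import Dict, List, Set, Tuple

# Toy node (hypothetical layout): (node_name, input_tensor_names, output_tensor_names)
Node = Tuple[str, List[str], List[str]]


def extract_subgraph(
    nodes: List[Node],
    input_names: List[str],
    output_names: List[str],
) -> List[Node]:
    """Keep only the nodes needed to compute output_names from input_names."""
    # Map each tensor name to the node that produces it.
    producer: Dict[str, Node] = {}
    for node in nodes:
        for out in node[2]:
            producer[out] = node

    boundary: Set[str] = set(input_names)
    needed: Set[str] = set()
    seen: Set[str] = set()
    stack = list(output_names)
    while stack:
        tensor = stack.pop()
        # Stop walking at the requested inputs and at tensors already visited.
        if tensor in boundary or tensor in seen:
            continue
        seen.add(tensor)
        node = producer.get(tensor)
        if node is None:
            continue  # no producer: a graph-level input or a constant
        needed.add(node[0])
        stack.extend(node[1])

    # Preserve the original node order.
    return [n for n in nodes if n[0] in needed]


toy_graph = [
    ("conv1", ["x"], ["a"]),
    ("relu1", ["a"], ["b"]),
    ("conv2", ["b"], ["c"]),
    ("relu2", ["c"], ["y"]),
]
sub = extract_subgraph(toy_graph, input_names=["a"], output_names=["c"])
print([n[0] for n in sub])  # ['relu1', 'conv2']
```

Walking backward from the outputs means that nodes which do not contribute to the requested outputs are never visited, so they drop out of the result without a separate pruning pass.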

## 1. Setup

### 1-1. HostPC

```bash
### option
$ echo export PATH="~/.local/bin:$PATH" >> ~/.bashrc \
&& source ~/.bashrc

### run
$ pip install -U onnx \
&& python3 -m pip install -U onnx_graphsurgeon --index-url https://pypi.ngc.nvidia.com \
&& pip install -U sne4onnx
```

### 1-2. Docker

https://github.com/PINTO0309/simple-onnx-processing-tools#docker

## 2. CLI Usage

```bash
$ sne4onnx -h

usage:
    sne4onnx [-h]
    -if INPUT_ONNX_FILE_PATH
    -ion INPUT_OP_NAMES
    -oon OUTPUT_OP_NAMES
    [-of OUTPUT_ONNX_FILE_PATH]
    [-n]

optional arguments:
  -h, --help
      show this help message and exit

  -if INPUT_ONNX_FILE_PATH, --input_onnx_file_path INPUT_ONNX_FILE_PATH
      Input onnx file path.

  -ion INPUT_OP_NAMES [INPUT_OP_NAMES ...], --input_op_names INPUT_OP_NAMES [INPUT_OP_NAMES ...]
      List of OP names to specify for the input layer of the model.
      e.g. --input_op_names aaa bbb ccc

  -oon OUTPUT_OP_NAMES [OUTPUT_OP_NAMES ...], --output_op_names OUTPUT_OP_NAMES [OUTPUT_OP_NAMES ...]
      List of OP names to specify for the output layer of the model.
      e.g. --output_op_names ddd eee fff

  -of OUTPUT_ONNX_FILE_PATH, --output_onnx_file_path OUTPUT_ONNX_FILE_PATH
      Output onnx file path. If not specified, extracted.onnx is output.

  -n, --non_verbose
      Do not show all information logs. Only error logs are displayed.
```
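
The option list above maps naturally onto `argparse`. The following is a sketch of a parser that accepts the same flags. It is written from the help text only and is not the tool's actual source; `build_parser` is a hypothetical name, and treating the three unbracketed options as required is an assumption based on the usage line.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Approximate the documented sne4onnx command-line interface."""
    parser = argparse.ArgumentParser(prog="sne4onnx")
    parser.add_argument(
        "-if", "--input_onnx_file_path",
        type=str, required=True,
        help="Input onnx file path.",
    )
    parser.add_argument(
        "-ion", "--input_op_names",
        type=str, nargs="+", required=True,
        help="List of OP names to specify for the input layer of the model.",
    )
    parser.add_argument(
        "-oon", "--output_op_names",
        type=str, nargs="+", required=True,
        help="List of OP names to specify for the output layer of the model.",
    )
    parser.add_argument(
        "-of", "--output_onnx_file_path",
        type=str, default="extracted.onnx",
        help="Output onnx file path.",
    )
    parser.add_argument(
        "-n", "--non_verbose",
        action="store_true",
        help="Only error logs are displayed.",
    )
    return parser


args = build_parser().parse_args(
    ["-if", "input.onnx", "-ion", "aaa", "bbb", "-oon", "ddd"]
)
print(args.input_op_names, args.output_onnx_file_path)
```

Using `nargs="+"` is what lets several OP names follow a single flag, as in `--input_op_names aaa bbb ccc`.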

## 3. In-script Usage

```bash
$ python
>>> from sne4onnx import extraction
>>> help(extraction)

Help on function extraction in module sne4onnx.onnx_network_extraction:

extraction(
    input_op_names: List[str],
    output_op_names: List[str],
    input_onnx_file_path: Union[str, NoneType] = '',
    onnx_graph: Union[onnx.onnx_ml_pb2.ModelProto, NoneType] = None,
    output_onnx_file_path: Union[str, NoneType] = '',
    non_verbose: Optional[bool] = False
) -> onnx.onnx_ml_pb2.ModelProto

    Parameters
    ----------
    input_op_names: List[str]
        List of OP names to specify for the input layer of the model.
        e.g. ['aaa','bbb','ccc']

    output_op_names: List[str]
        List of OP names to specify for the output layer of the model.
        e.g. ['ddd','eee','fff']

    input_onnx_file_path: Optional[str]
        Input onnx file path.
        Either input_onnx_file_path or onnx_graph must be specified.
        onnx_graph If specified, ignore input_onnx_file_path and process onnx_graph.

    onnx_graph: Optional[onnx.ModelProto]
        onnx.ModelProto.
        Either input_onnx_file_path or onnx_graph must be specified.
        onnx_graph If specified, ignore input_onnx_file_path and process onnx_graph.

    output_onnx_file_path: Optional[str]
        Output onnx file path.
        If not specified, .onnx is not output.
        Default: ''

    non_verbose: Optional[bool]
        Do not show all information logs. Only error logs are displayed.
        Default: False

    Returns
    -------
    extracted_graph: onnx.ModelProto
        Extracted onnx ModelProto
```
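
The help text states a precedence rule: `onnx_graph` wins over `input_onnx_file_path`, and at least one of the two must be given. The sketch below restates that rule as a small standalone function. `select_model_source` is a hypothetical helper, not part of sne4onnx, and raising `ValueError` when both are missing is an assumption, since the help text does not say how the real function reports that case.

```python
from typing import Any, Optional


def select_model_source(
    input_onnx_file_path: Optional[str] = "",
    onnx_graph: Optional[Any] = None,
) -> str:
    """Return "graph" or "file" following the documented precedence."""
    # A supplied onnx_graph takes priority; the file path is then ignored.
    if onnx_graph is not None:
        return "graph"
    # Otherwise fall back to a non-empty file path.
    if input_onnx_file_path:
        return "file"
    # Assumed error type; the real behavior is not documented in the help text.
    raise ValueError(
        "Either input_onnx_file_path or onnx_graph must be specified."
    )


print(select_model_source(input_onnx_file_path="input.onnx"))
print(select_model_source("input.onnx", onnx_graph=object()))
```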

## 4. CLI Execution

```bash
$ sne4onnx \
--input_onnx_file_path input.onnx \
--input_op_names aaa bbb ccc \
--output_op_names ddd eee fff \
--output_onnx_file_path output.onnx
```

## 5. In-script Execution

### 5-1. Use ONNX files

```python
from sne4onnx import extraction

extracted_graph = extraction(
    input_op_names=['aaa','bbb','ccc'],
    output_op_names=['ddd','eee','fff'],
    input_onnx_file_path='input.onnx',
    output_onnx_file_path='output.onnx',
)
```

### 5-2. Use onnx.ModelProto

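```python
import onnx
from sne4onnx import extraction

# Load the model as an onnx.ModelProto and pass it in directly.
graph = onnx.load('input.onnx')

extracted_graph = extraction(
    input_op_names=['aaa','bbb','ccc'],
    output_op_names=['ddd','eee','fff'],
    onnx_graph=graph,
    output_onnx_file_path='output.onnx',
)
```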
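
Because `extraction` returns an `onnx.ModelProto` and also accepts one through `onnx_graph`, one model can be cut into a head and a tail at a set of boundary names. The sketch below only builds the keyword arguments for the two calls, so it runs without sne4onnx installed. `plan_split`, the default file names, and all OP names are hypothetical, and the sketch assumes that the head's output names are valid input names for the tail.

```python
from typing import Any, Dict, List, Tuple


def plan_split(
    model_inputs: List[str],
    boundary_names: List[str],
    model_outputs: List[str],
    head_path: str = "head.onnx",
    tail_path: str = "tail.onnx",
) -> Tuple[Dict[str, Any], Dict[str, Any]]:
    """Build extraction() keyword arguments for a head/tail split."""
    # Head: from the model inputs up to the boundary.
    head_kwargs = {
        "input_op_names": list(model_inputs),
        "output_op_names": list(boundary_names),
        "output_onnx_file_path": head_path,
    }
    # Tail: from the boundary down to the model outputs.
    tail_kwargs = {
        "input_op_names": list(boundary_names),
        "output_op_names": list(model_outputs),
        "output_onnx_file_path": tail_path,
    }
    return head_kwargs, tail_kwargs


head_kwargs, tail_kwargs = plan_split(["aaa"], ["ddd", "eee"], ["zzz"])

# With sne4onnx installed and `graph` loaded, the plan could be applied as:
#   from sne4onnx import extraction
#   head = extraction(onnx_graph=graph, **head_kwargs)
#   tail = extraction(onnx_graph=graph, **tail_kwargs)
print(head_kwargs["output_op_names"], tail_kwargs["input_op_names"])
```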

## 6. Samples

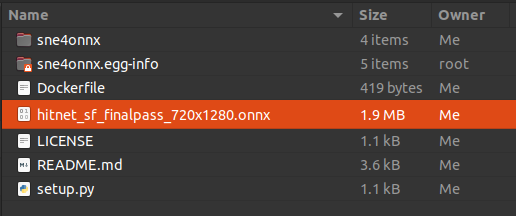

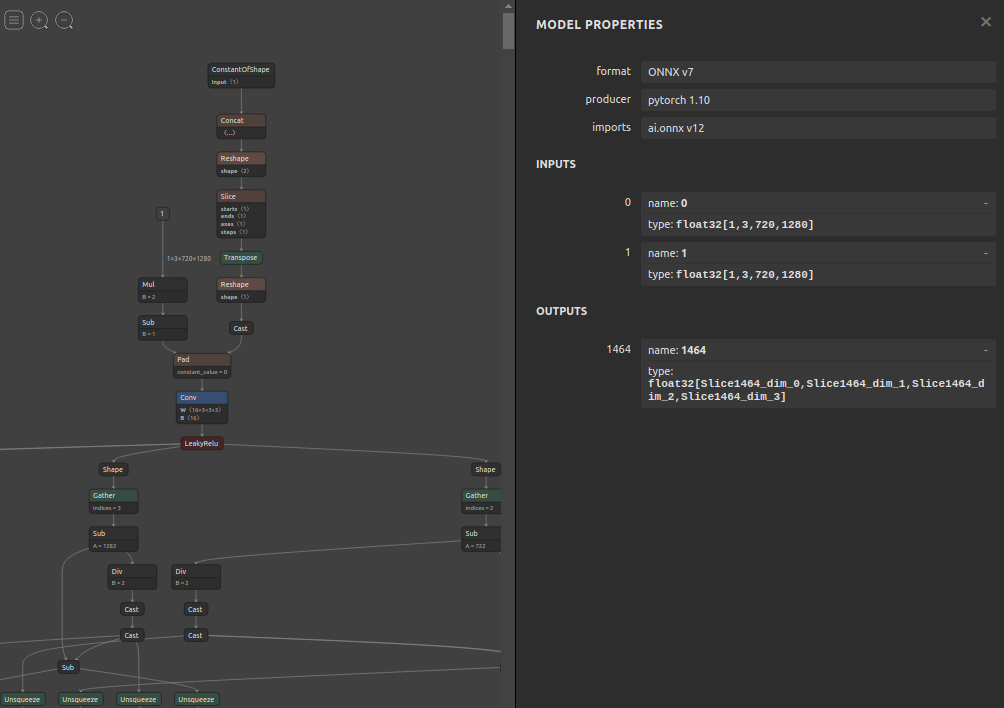

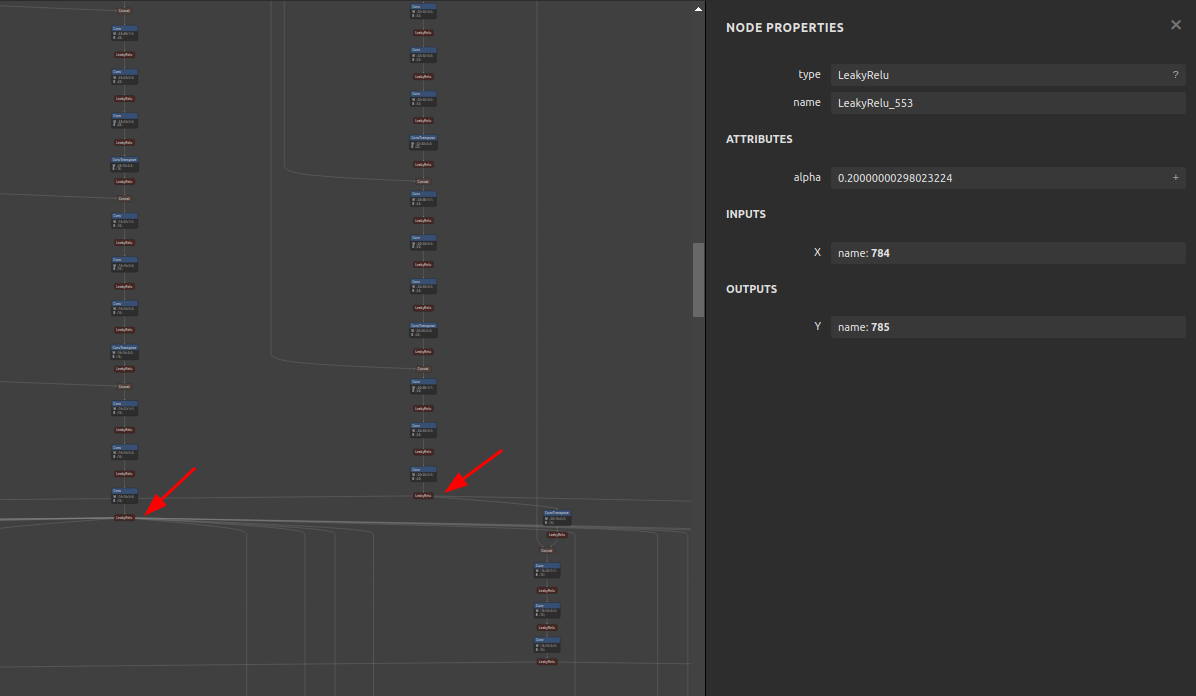

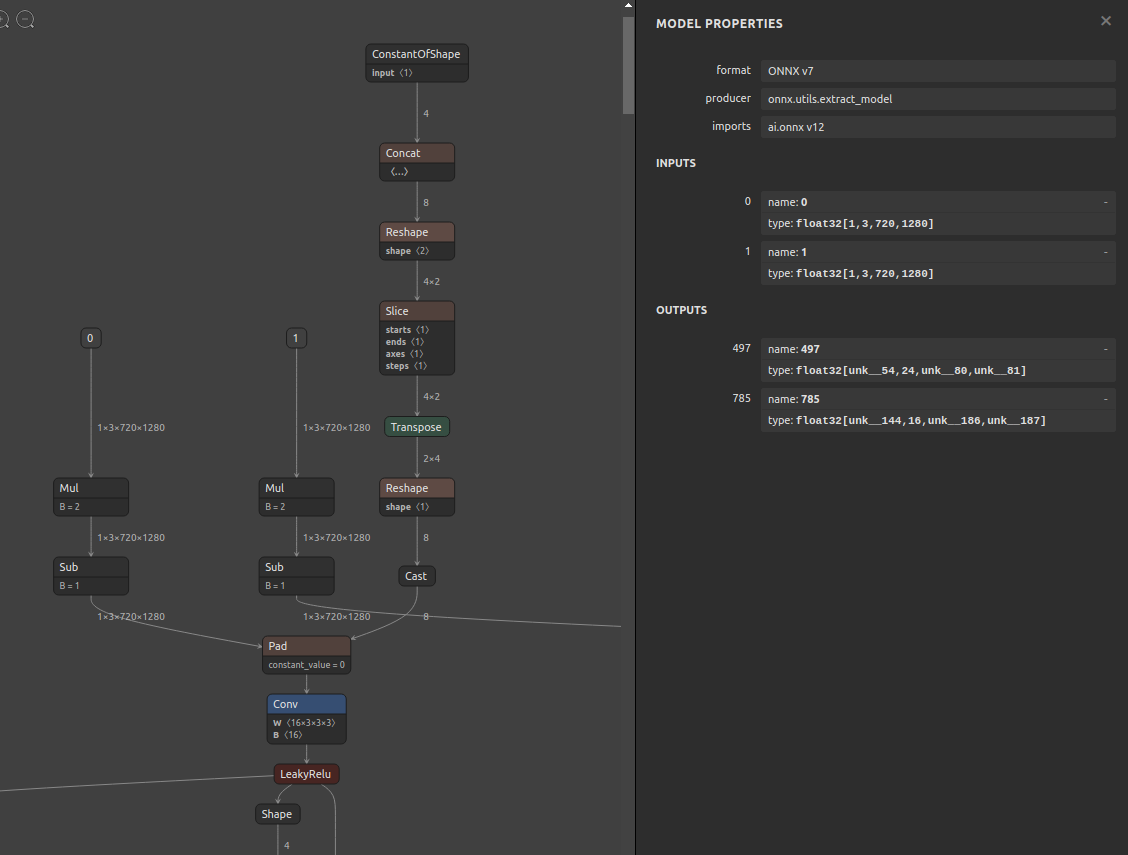

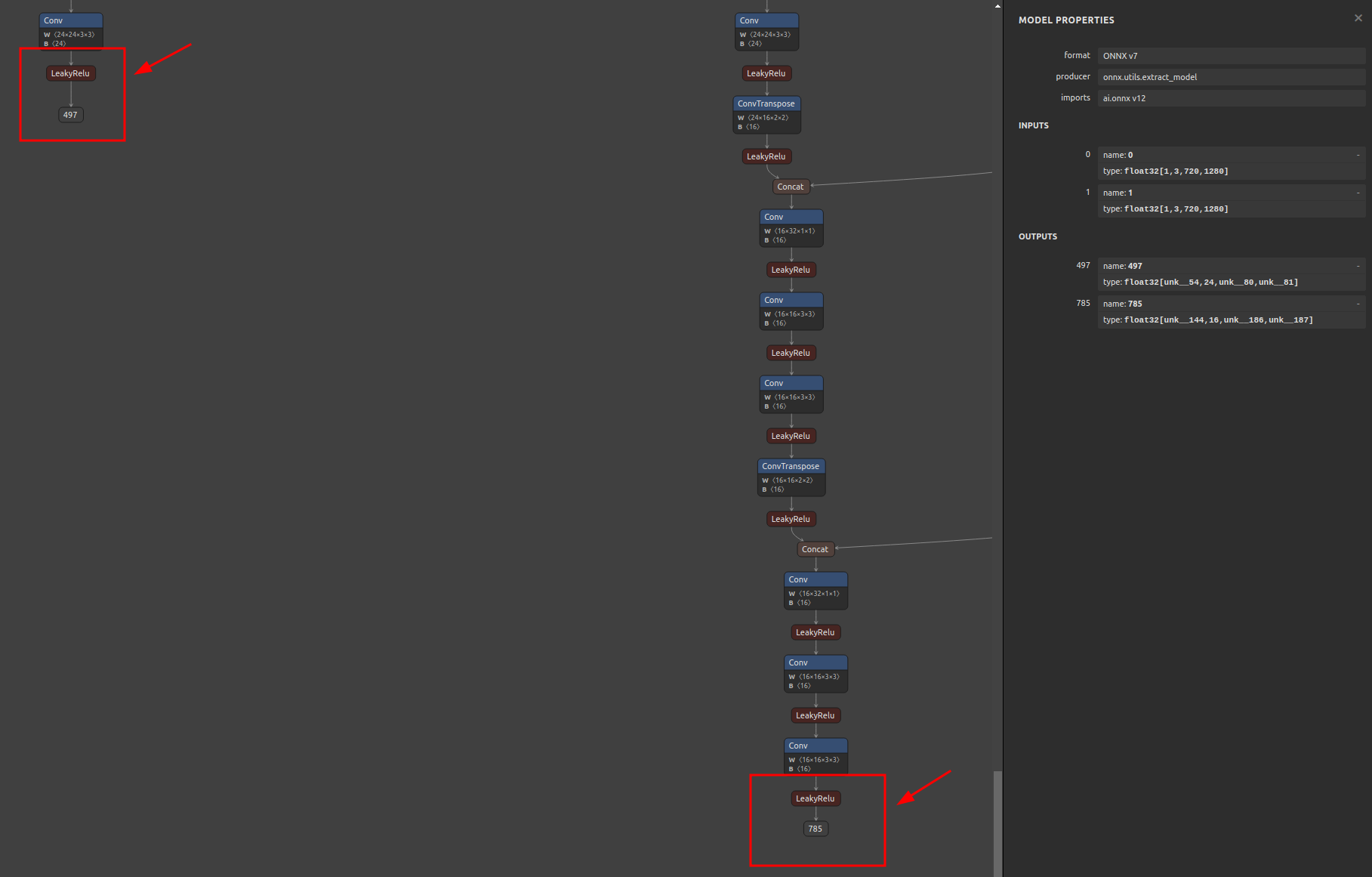

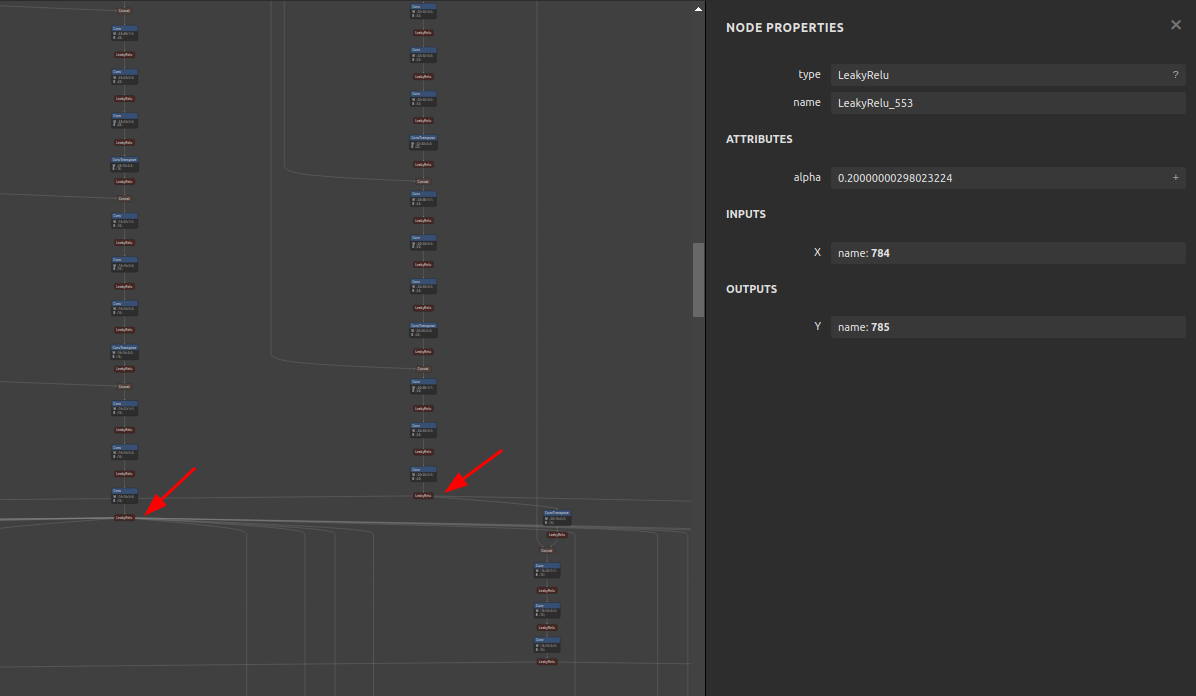

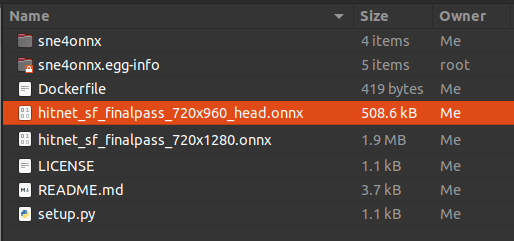

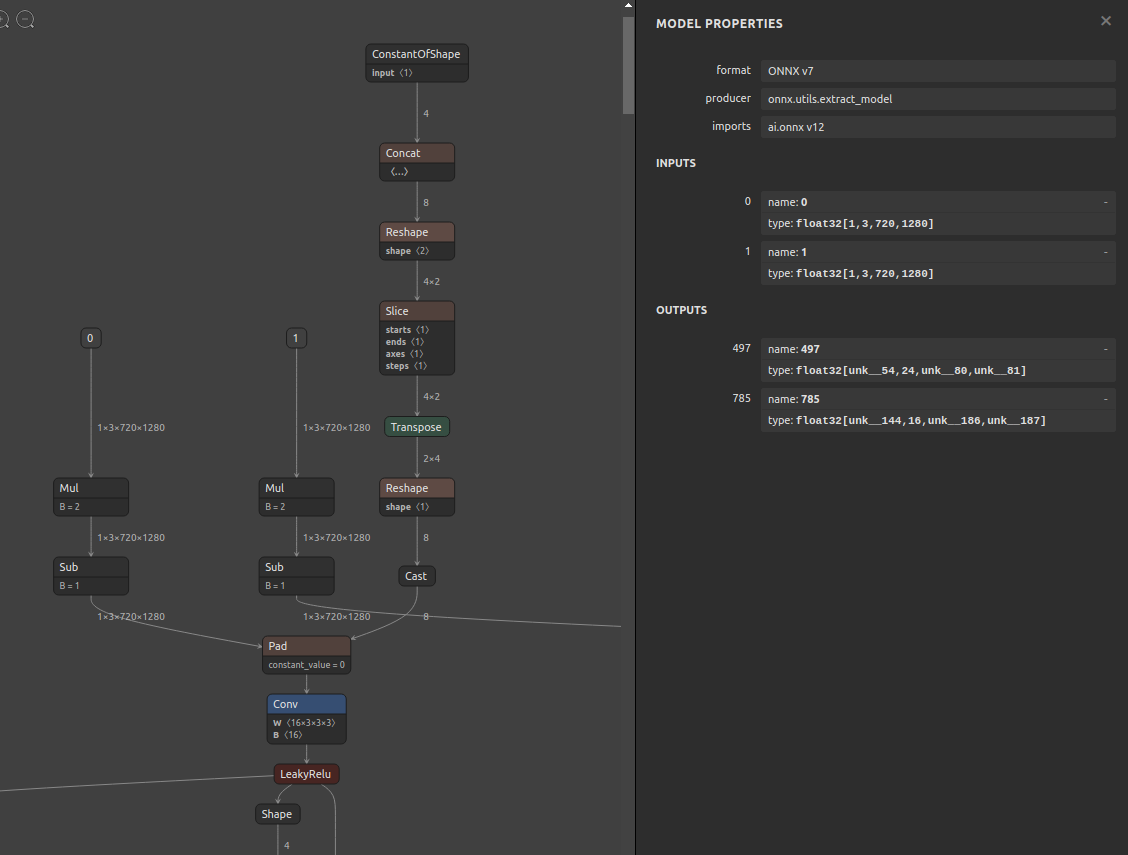

### 6-1. Pre-extraction

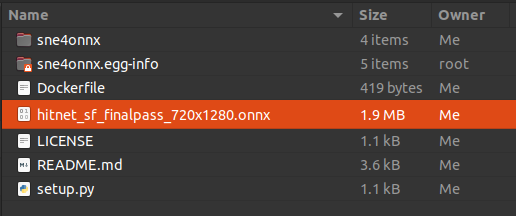

### 6-2. Extraction

```bash
$ sne4onnx \
--input_onnx_file_path hitnet_sf_finalpass_720x1280.onnx \
--input_op_names 0 1 \
--output_op_names 497 785 \
--output_onnx_file_path hitnet_sf_finalpass_720x960_head.onnx
```

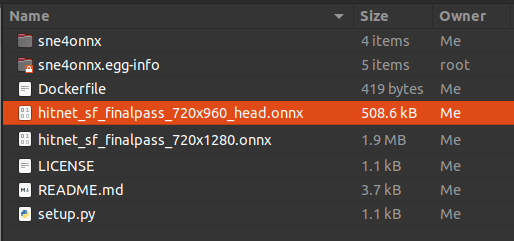

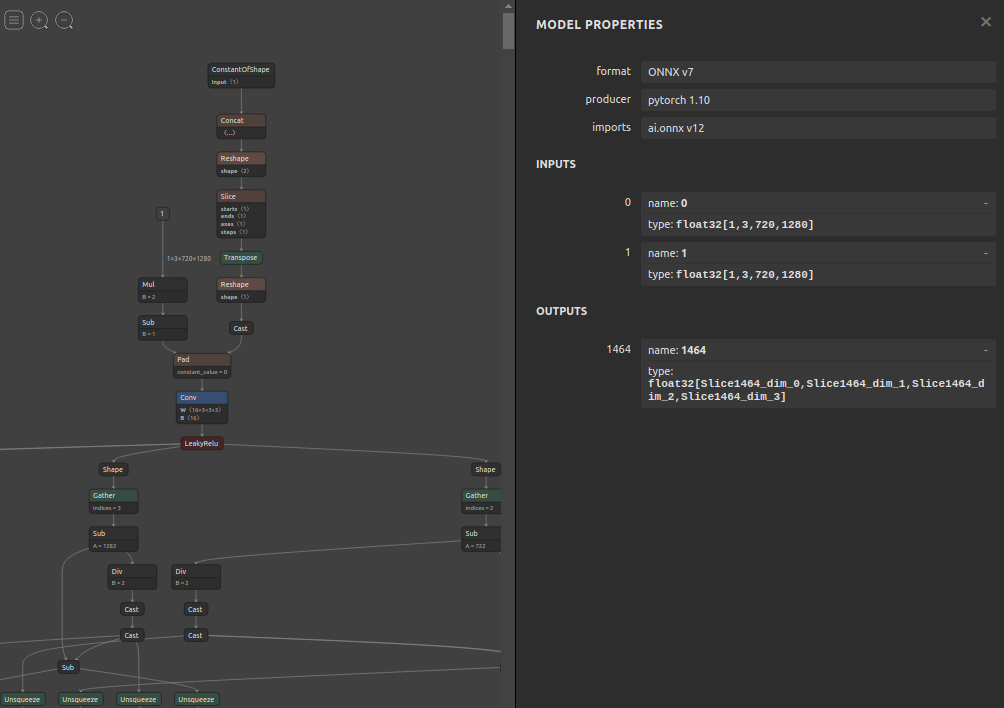

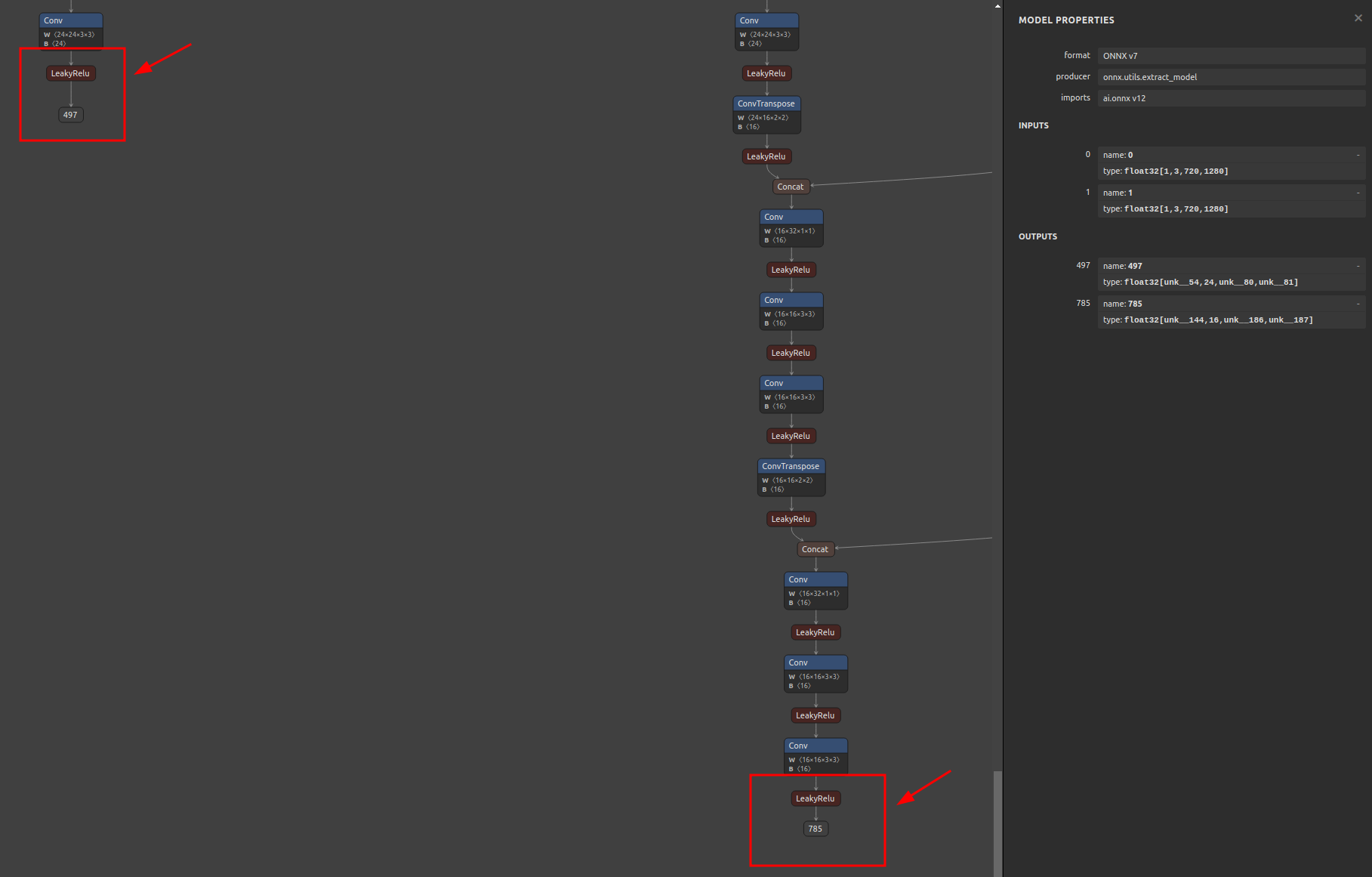

### 6-3. Extracted

## 7. Reference

1. https://github.com/onnx/onnx/blob/main/docs/PythonAPIOverview.md

2. https://docs.nvidia.com/deeplearning/tensorrt/onnx-graphsurgeon/docs/index.html

3. https://github.com/NVIDIA/TensorRT/tree/main/tools/onnx-graphsurgeon

4. https://github.com/PINTO0309/snd4onnx

5. https://github.com/PINTO0309/scs4onnx

6. https://github.com/PINTO0309/snc4onnx

7. https://github.com/PINTO0309/sog4onnx

8. https://github.com/PINTO0309/PINTO_model_zoo

## 8. Issues

https://github.com/PINTO0309/simple-onnx-processing-tools/issues

Raw data

{

"_id": null,

"home_page": "https://github.com/PINTO0309/sne4onnx",

"name": "sne4onnx",

"maintainer": null,

"docs_url": null,

"requires_python": ">=3.6",

"maintainer_email": null,

"keywords": null,

"author": "Katsuya Hyodo",

"author_email": "rmsdh122@yahoo.co.jp",

"download_url": "https://files.pythonhosted.org/packages/be/10/1ba8adb8aacd360f9bacc0d4425ed90803af78b35abd535e54991dc0af91/sne4onnx-1.0.12.tar.gz",

"platform": "linux",

"bugtrack_url": null,

"license": "MIT License",

"summary": "A very simple tool for situations where optimization with onnx-simplifier would exceed the Protocol Buffers upper file size limit of 2GB, or simply to separate onnx files to any size you want. Simple Network Extraction for ONNX.",

"version": "1.0.12",

"project_urls": {

"Homepage": "https://github.com/PINTO0309/sne4onnx"

},

"split_keywords": [],

"urls": [

{

"comment_text": "",

"digests": {

"blake2b_256": "49d0336805ac5d1e81f65f781d2b4cba5a0851271d4101b2aca409766ee78980",

"md5": "b47573f2b85c99a188fc0ccc156e0437",

"sha256": "8878074b9c1fe375e3be8c11c4a59975c1438f1045f88c6bdb78f7f64a8ae65c"

},

"downloads": -1,

"filename": "sne4onnx-1.0.12-py3-none-any.whl",

"has_sig": false,

"md5_digest": "b47573f2b85c99a188fc0ccc156e0437",

"packagetype": "bdist_wheel",

"python_version": "py3",

"requires_python": ">=3.6",

"size": 7081,

"upload_time": "2024-04-30T05:10:54",

"upload_time_iso_8601": "2024-04-30T05:10:54.159399Z",

"url": "https://files.pythonhosted.org/packages/49/d0/336805ac5d1e81f65f781d2b4cba5a0851271d4101b2aca409766ee78980/sne4onnx-1.0.12-py3-none-any.whl",

"yanked": false,

"yanked_reason": null

},

{

"comment_text": "",

"digests": {

"blake2b_256": "be101ba8adb8aacd360f9bacc0d4425ed90803af78b35abd535e54991dc0af91",

"md5": "e1eb6735931eecc6d7457e888a2a49c2",

"sha256": "7f3764457f53caabbe0c2d49456d96828e93affe9dfa30fc8cbb2d6f93eadf6c"

},

"downloads": -1,

"filename": "sne4onnx-1.0.12.tar.gz",

"has_sig": false,

"md5_digest": "e1eb6735931eecc6d7457e888a2a49c2",

"packagetype": "sdist",

"python_version": "source",

"requires_python": ">=3.6",

"size": 6350,

"upload_time": "2024-04-30T05:10:55",

"upload_time_iso_8601": "2024-04-30T05:10:55.647549Z",

"url": "https://files.pythonhosted.org/packages/be/10/1ba8adb8aacd360f9bacc0d4425ed90803af78b35abd535e54991dc0af91/sne4onnx-1.0.12.tar.gz",

"yanked": false,

"yanked_reason": null

}

],

"upload_time": "2024-04-30 05:10:55",

"github": true,

"gitlab": false,

"bitbucket": false,

"codeberg": false,

"github_user": "PINTO0309",

"github_project": "sne4onnx",

"travis_ci": false,

"coveralls": false,

"github_actions": true,

"lcname": "sne4onnx"

}