# Whisper

[[Blog]](https://openai.com/blog/whisper)

[[Paper]](https://arxiv.org/abs/2212.04356)

[[Model card]](https://github.com/openai/whisper/blob/main/model-card.md)

[[Colab example]](https://colab.research.google.com/github/openai/whisper/blob/master/notebooks/LibriSpeech.ipynb)

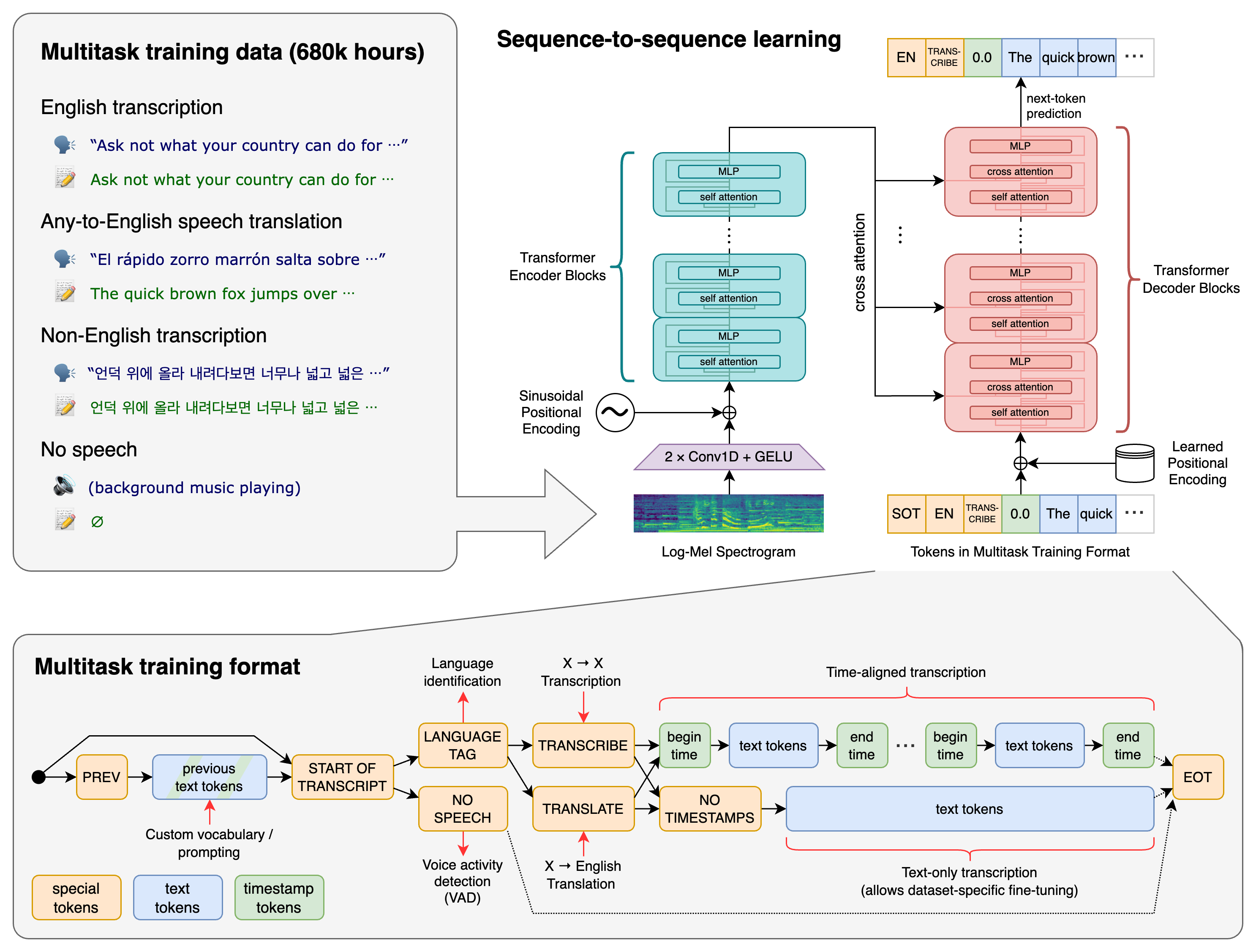

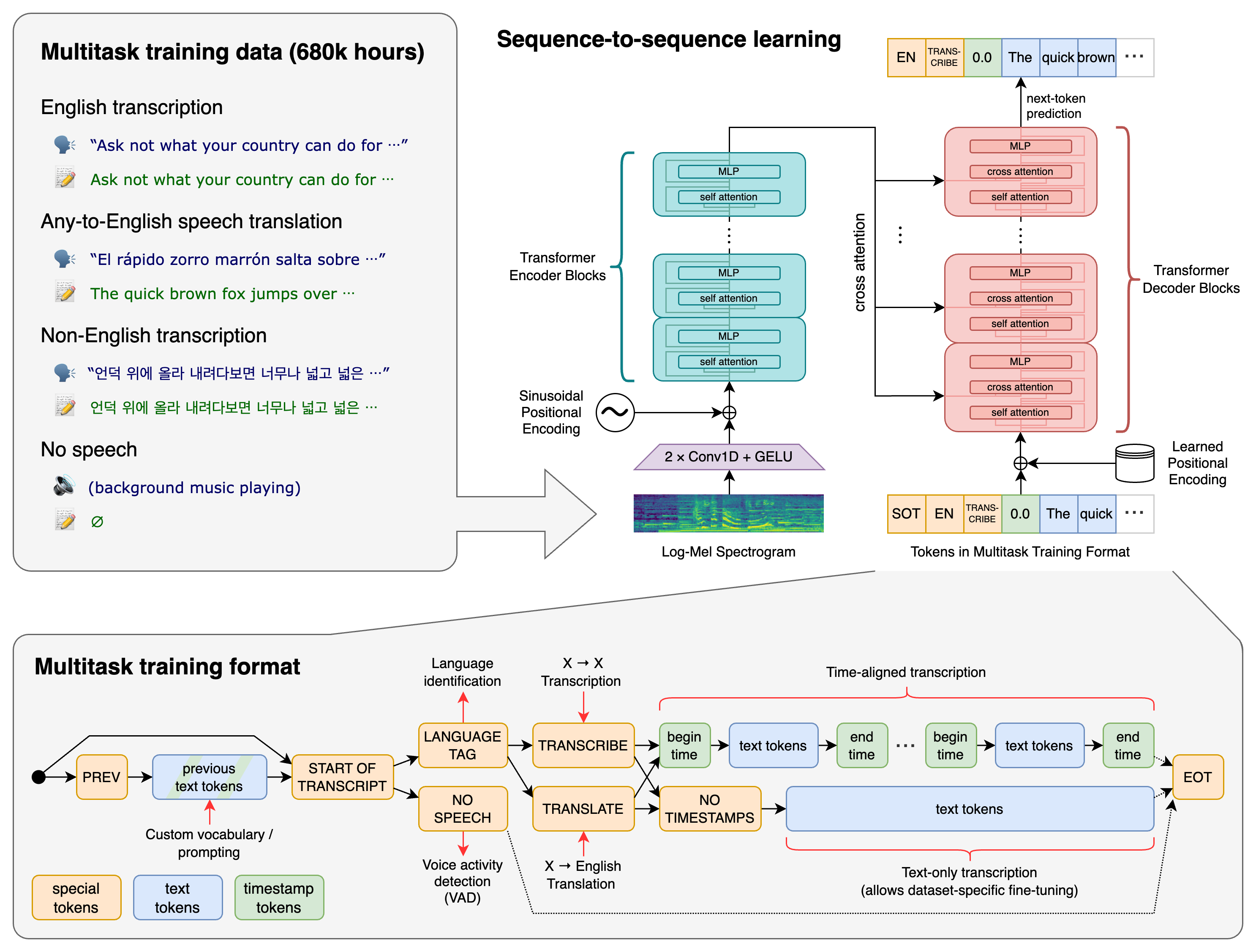

Whisper is a general-purpose speech recognition model. It is trained on a large dataset of diverse audio and is also a multitasking model that can perform multilingual speech recognition, speech translation, and language identification.

## Approach

A Transformer sequence-to-sequence model is trained on various speech processing tasks, including multilingual speech recognition, speech translation, spoken language identification, and voice activity detection. These tasks are jointly represented as a sequence of tokens to be predicted by the decoder, allowing a single model to replace many stages of a traditional speech-processing pipeline. The multitask training format uses a set of special tokens that serve as task specifiers or classification targets.
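As a rough illustration of the multitask format, the sketch below assembles the textual form of the special-token prefix that tells the decoder which task to perform. The token spellings follow the paper; the helper name `build_task_prompt` is hypothetical and is not part of the `whisper` package, which handles this internally through its tokenizer.

```python
# Illustrative only: builds the textual form of the decoder's task prefix.
# `build_task_prompt` is a hypothetical helper, not a whisper API.

def build_task_prompt(language: str, task: str = "transcribe", timestamps: bool = False) -> str:
    """Return the special-token prefix for a language code and task."""
    if task not in ("transcribe", "translate"):
        raise ValueError(f"unknown task: {task!r}")
    tokens = ["<|startoftranscript|>", f"<|{language}|>", f"<|{task}|>"]
    if not timestamps:
        # Signals that the decoder should emit text without timestamp tokens.
        tokens.append("<|notimestamps|>")
    return "".join(tokens)


# Japanese speech translated into English, without timestamps.
print(build_task_prompt("ja", task="translate"))
```

The same model weights serve every task; only this prefix changes.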

## Setup

We used Python 3.9.9 and [PyTorch](https://pytorch.org/) 1.10.1 to train and test our models, but the codebase is expected to be compatible with Python 3.8-3.11 and recent PyTorch versions. The codebase also depends on a few Python packages, most notably [OpenAI's tiktoken](https://github.com/openai/tiktoken) for its fast tokenizer implementation. You can download and install (or update to) the latest release of Whisper with the following command:

    pip install -U openai-whisper

Alternatively, the following command will pull and install the latest commit from this repository, along with its Python dependencies:

    pip install git+https://github.com/openai/whisper.git

To update the package to the latest version of this repository, please run:

    pip install --upgrade --no-deps --force-reinstall git+https://github.com/openai/whisper.git

It also requires the command-line tool [`ffmpeg`](https://ffmpeg.org/) to be installed on your system, which is available from most package managers:

```bash
# on Ubuntu or Debian
sudo apt update && sudo apt install ffmpeg

# on Arch Linux
sudo pacman -S ffmpeg

# on MacOS using Homebrew (https://brew.sh/)
brew install ffmpeg

# on Windows using Chocolatey (https://chocolatey.org/)
choco install ffmpeg

# on Windows using Scoop (https://scoop.sh/)
scoop install ffmpeg
```
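Before loading any audio, it can help to confirm that `ffmpeg` is actually reachable from Python. The following is a minimal sketch using only the standard library; the function name `ffmpeg_available` is an illustrative choice, not something provided by Whisper.

```python
import shutil


def ffmpeg_available() -> bool:
    """Return True if an `ffmpeg` executable can be found on PATH."""
    return shutil.which("ffmpeg") is not None


if not ffmpeg_available():
    print("ffmpeg was not found on PATH; install it before running Whisper.")
```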

You may need [`rust`](http://rust-lang.org) installed as well, in case [tiktoken](https://github.com/openai/tiktoken) does not provide a pre-built wheel for your platform. If you see installation errors during the `pip install` command above, please follow the [Getting started page](https://www.rust-lang.org/learn/get-started) to install the Rust development environment. Additionally, you may need to configure the `PATH` environment variable, e.g. `export PATH="$HOME/.cargo/bin:$PATH"`. If the installation fails with `No module named 'setuptools_rust'`, you need to install `setuptools_rust`, e.g. by running:

```bash
pip install setuptools-rust
```

## Available models and languages

There are five model sizes, four with English-only versions, offering speed and accuracy tradeoffs. Below are the names of the available models and their approximate memory requirements and inference speed relative to the large model; actual speed may vary depending on many factors including the available hardware.

| Size | Parameters | English-only model | Multilingual model | Required VRAM | Relative speed |
|:------:|:----------:|:------------------:|:------------------:|:-------------:|:--------------:|
| tiny | 39 M | `tiny.en` | `tiny` | ~1 GB | ~32x |
| base | 74 M | `base.en` | `base` | ~1 GB | ~16x |
| small | 244 M | `small.en` | `small` | ~2 GB | ~6x |
| medium | 769 M | `medium.en` | `medium` | ~5 GB | ~2x |
| large | 1550 M | N/A | `large` | ~10 GB | 1x |
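One way to use the table is to pick the largest model that fits a given memory budget. The sketch below hard-codes the approximate VRAM figures from the table; the helper `largest_model_for` is hypothetical, and the figures are rough guides rather than guarantees.

```python
# (model name, approximate required VRAM in GB), copied from the table
# above and ordered from smallest to largest.
MODEL_VRAM_GB = [("tiny", 1), ("base", 1), ("small", 2), ("medium", 5), ("large", 10)]


def largest_model_for(vram_gb: float, english_only: bool = False) -> str:
    """Return the name of the largest model whose listed VRAM fits the budget."""
    candidates = [name for name, need in MODEL_VRAM_GB if need <= vram_gb]
    if english_only:
        # The large model has no English-only variant.
        candidates = [name for name in candidates if name != "large"]
    if not candidates:
        raise ValueError(f"no model fits in {vram_gb} GB of VRAM")
    name = candidates[-1]
    return f"{name}.en" if english_only else name


print(largest_model_for(6))                      # medium
print(largest_model_for(12, english_only=True))  # medium.en
```

The returned string can be passed to `whisper.load_model()` or to the `--model` command-line option.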

The `.en` models for English-only applications tend to perform better, especially for the `tiny.en` and `base.en` models. We observed that the difference becomes less significant for the `small.en` and `medium.en` models.

Whisper's performance varies widely depending on the language. The figure below shows a performance breakdown of `large-v3` and `large-v2` models by language, using WERs (word error rates) or CERs (character error rates, shown in *italics*) evaluated on the Common Voice 15 and Fleurs datasets. Additional WER/CER metrics corresponding to the other models and datasets can be found in Appendix D.1, D.2, and D.4 of [the paper](https://arxiv.org/abs/2212.04356), as well as the BLEU (Bilingual Evaluation Understudy) scores for translation in Appendix D.3.
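For readers unfamiliar with these metrics, WER is the word-level edit distance between the reference and the hypothesis divided by the number of reference words, and CER is the same ratio over characters. The sketch below is a plain implementation of that definition; it is not the evaluation code behind the reported numbers, and it skips the text normalization that the paper applies before scoring.

```python
def edit_distance(ref, hyp) -> int:
    """Levenshtein distance between two sequences (substitution, insertion, deletion)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def word_error_rate(reference: str, hypothesis: str) -> float:
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def character_error_rate(reference: str, hypothesis: str) -> float:
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(reference, hypothesis) / len(reference)


# One substitution ("sat" -> "sit") and one deletion ("the"): 2 errors / 6 words.
print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```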

## Command-line usage

The following command will transcribe speech in audio files, using the `medium` model:

    whisper audio.flac audio.mp3 audio.wav --model medium

The default setting (which selects the `small` model) works well for transcribing English. To transcribe an audio file containing non-English speech, you can specify the language using the `--language` option:

    whisper japanese.wav --language Japanese

Adding `--task translate` will translate the speech into English:

    whisper japanese.wav --language Japanese --task translate

Run the following to view all available options:

    whisper --help

See [tokenizer.py](https://github.com/openai/whisper/blob/main/whisper/tokenizer.py) for the list of all available languages.
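When the command-line tool is driven from a script, the argument list can be assembled programmatically. The sketch below uses only the options shown above (`--model`, `--language`, `--task`); the helper `whisper_command` is hypothetical, and it only builds the command rather than running it.

```python
from typing import List, Optional


def whisper_command(files: List[str],
                    model: Optional[str] = None,
                    language: Optional[str] = None,
                    task: Optional[str] = None) -> List[str]:
    """Build an argument list for the `whisper` command-line tool."""
    if not files:
        raise ValueError("at least one audio file is required")
    cmd = ["whisper", *files]
    if model:
        cmd += ["--model", model]
    if language:
        cmd += ["--language", language]
    if task:
        cmd += ["--task", task]
    return cmd


cmd = whisper_command(["japanese.wav"], language="Japanese", task="translate")
print(" ".join(cmd))

# To actually run it (requires the whisper CLI and ffmpeg on PATH):
#   import subprocess
#   subprocess.run(cmd, check=True)
```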

## Python usage

Transcription can also be performed within Python:

```python
import whisper

model = whisper.load_model("base")
result = model.transcribe("audio.mp3")
print(result["text"])
```
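Besides `"text"`, the dictionary returned by `transcribe()` also contains a `"segments"` list whose entries carry `"start"` and `"end"` times in seconds. The sketch below formats such segments as timestamped lines; the helper names are illustrative, and `fake_result` is a hand-written stand-in so the example runs without a model or audio file.

```python
def format_timestamp(seconds: float) -> str:
    """Format a time in seconds as HH:MM:SS.mmm."""
    ms = round(seconds * 1000)
    hours, ms = divmod(ms, 3_600_000)
    minutes, ms = divmod(ms, 60_000)
    secs, ms = divmod(ms, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def format_segments(result: dict) -> str:
    """Render each segment as '[start --> end] text' on its own line."""
    lines = []
    for seg in result["segments"]:
        start = format_timestamp(seg["start"])
        end = format_timestamp(seg["end"])
        lines.append(f"[{start} --> {end}] {seg['text'].strip()}")
    return "\n".join(lines)


# Hand-written stand-in for the dictionary returned by model.transcribe().
fake_result = {
    "text": " Hello world. How are you?",
    "language": "en",
    "segments": [
        {"start": 0.0, "end": 2.5, "text": " Hello world."},
        {"start": 2.5, "end": 4.0, "text": " How are you?"},
    ],
}
print(format_segments(fake_result))
```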

Internally, the `transcribe()` method reads the entire file and processes the audio with a sliding 30-second window, performing autoregressive sequence-to-sequence predictions on each window.
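To give a feel for the windowing, the sketch below splits a sample count into consecutive 30-second windows at 16 kHz, the sample rate Whisper works with. This is a deliberate simplification: the real `transcribe()` advances its window based on the timestamps the model predicts rather than by a fixed stride, and it pads the final window to the full 30 seconds. The function `iter_windows` is hypothetical.

```python
SAMPLE_RATE = 16_000                           # samples per second
WINDOW_SECONDS = 30
WINDOW_SAMPLES = SAMPLE_RATE * WINDOW_SECONDS  # 480,000 samples per window


def iter_windows(num_samples: int, window: int = WINDOW_SAMPLES):
    """Yield (start, end) sample indices of consecutive fixed-size windows."""
    for start in range(0, num_samples, window):
        yield start, min(start + window, num_samples)


# A 75-second clip yields two full windows and one 15-second remainder.
for start, end in iter_windows(75 * SAMPLE_RATE):
    print(f"{start / SAMPLE_RATE:5.1f}s to {end / SAMPLE_RATE:5.1f}s")
```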

Below is an example usage of `whisper.detect_language()` and `whisper.decode()`, which provide lower-level access to the model.

```python
import whisper

model = whisper.load_model("base")

# load audio and pad/trim it to fit 30 seconds
audio = whisper.load_audio("audio.mp3")
audio = whisper.pad_or_trim(audio)

# make log-Mel spectrogram and move to the same device as the model
mel = whisper.log_mel_spectrogram(audio).to(model.device)

# detect the spoken language
_, probs = model.detect_language(mel)
print(f"Detected language: {max(probs, key=probs.get)}")

# decode the audio
options = whisper.DecodingOptions()
result = whisper.decode(model, mel, options)

# print the recognized text
print(result.text)
```
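In the example above, `probs` maps language codes to probabilities, so it can be inspected beyond the single most likely language. The sketch below ranks the top candidates; `top_languages` is a hypothetical helper, and `example_probs` contains made-up values so the snippet runs without a model.

```python
def top_languages(probs: dict, k: int = 3) -> list:
    """Return the k most probable (language, probability) pairs, highest first."""
    return sorted(probs.items(), key=lambda item: item[1], reverse=True)[:k]


# Made-up probabilities standing in for the output of model.detect_language().
example_probs = {"en": 0.02, "ja": 0.91, "zh": 0.05, "ko": 0.01}

for language, probability in top_languages(example_probs, k=2):
    print(f"{language}: {probability:.2f}")
```

Looking at the runner-up languages can help flag clips where the detection is uncertain.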

## More examples

Please use the [🙌 Show and tell](https://github.com/openai/whisper/discussions/categories/show-and-tell) category in Discussions for sharing more example usages of Whisper and third-party extensions such as web demos, integrations with other tools, ports for different platforms, etc.

## License

Whisper's code and model weights are released under the MIT License. See [LICENSE](https://github.com/openai/whisper/blob/main/LICENSE) for further details.

Raw data

{

"_id": null,

"home_page": "https://github.com/openai/whisper",

"name": "learnbyvideo-whisper",

"maintainer": null,

"docs_url": null,

"requires_python": ">=3.8",

"maintainer_email": null,

"keywords": null,

"author": "OpenAI",

"author_email": null,

"download_url": "https://files.pythonhosted.org/packages/bd/8e/c2b0229bb89b3e557a179e3ce3749966b26e48fabd6b46b5bd5872532fd6/learnbyvideo-whisper-20231117.tar.gz",

"platform": null,

"bugtrack_url": null,

"license": "MIT",

"summary": "Robust Speech Recognition via Large-Scale Weak Supervision",

"version": "20231117",

"project_urls": {

"Homepage": "https://github.com/openai/whisper"

},

"split_keywords": [],

"urls": [

{

"comment_text": "",

"digests": {

"blake2b_256": "77f226b8b406f2273d72238d0f1fb087a6ebbb2ffaea0a9e64a88d8df13b4a39",

"md5": "02f14828ff6303f18f7130b89e3551c2",

"sha256": "5686e61e6102ea88bb14ac778e5084d32d59f7b60cc91c256ffba459f638c469"

},

"downloads": -1,

"filename": "learnbyvideo_whisper-20231117-py3-none-any.whl",

"has_sig": false,

"md5_digest": "02f14828ff6303f18f7130b89e3551c2",

"packagetype": "bdist_wheel",

"python_version": "py3",

"requires_python": ">=3.8",

"size": 812246,

"upload_time": "2024-03-24T18:33:10",

"upload_time_iso_8601": "2024-03-24T18:33:10.394982Z",

"url": "https://files.pythonhosted.org/packages/77/f2/26b8b406f2273d72238d0f1fb087a6ebbb2ffaea0a9e64a88d8df13b4a39/learnbyvideo_whisper-20231117-py3-none-any.whl",

"yanked": false,

"yanked_reason": null

},

{

"comment_text": "",

"digests": {

"blake2b_256": "bd8ec2b0229bb89b3e557a179e3ce3749966b26e48fabd6b46b5bd5872532fd6",

"md5": "25376e79adbbda50485890b9d807405a",

"sha256": "09a90454a124ad1b6f77506e672311238909a21ad12d571e8881c74028206a34"

},

"downloads": -1,

"filename": "learnbyvideo-whisper-20231117.tar.gz",

"has_sig": false,

"md5_digest": "25376e79adbbda50485890b9d807405a",

"packagetype": "sdist",

"python_version": "source",

"requires_python": ">=3.8",

"size": 807993,

"upload_time": "2024-03-24T18:33:12",

"upload_time_iso_8601": "2024-03-24T18:33:12.890372Z",

"url": "https://files.pythonhosted.org/packages/bd/8e/c2b0229bb89b3e557a179e3ce3749966b26e48fabd6b46b5bd5872532fd6/learnbyvideo-whisper-20231117.tar.gz",

"yanked": false,

"yanked_reason": null

}

],

"upload_time": "2024-03-24 18:33:12",

"github": true,

"gitlab": false,

"bitbucket": false,

"codeberg": false,

"github_user": "openai",

"github_project": "whisper",

"travis_ci": false,

"coveralls": false,

"github_actions": true,

"requirements": [

{

"name": "numba",

"specs": []

},

{

"name": "numpy",

"specs": []

},

{

"name": "torch",

"specs": []

},

{

"name": "tqdm",

"specs": []

},

{

"name": "more-itertools",

"specs": []

},

{

"name": "tiktoken",

"specs": []

},

{

"name": "triton",

"specs": [

[

"<",

"3"

],

[

">=",

"2.0.0"

]

]

}

],

"lcname": "learnbyvideo-whisper"

}